Atom Humanoid

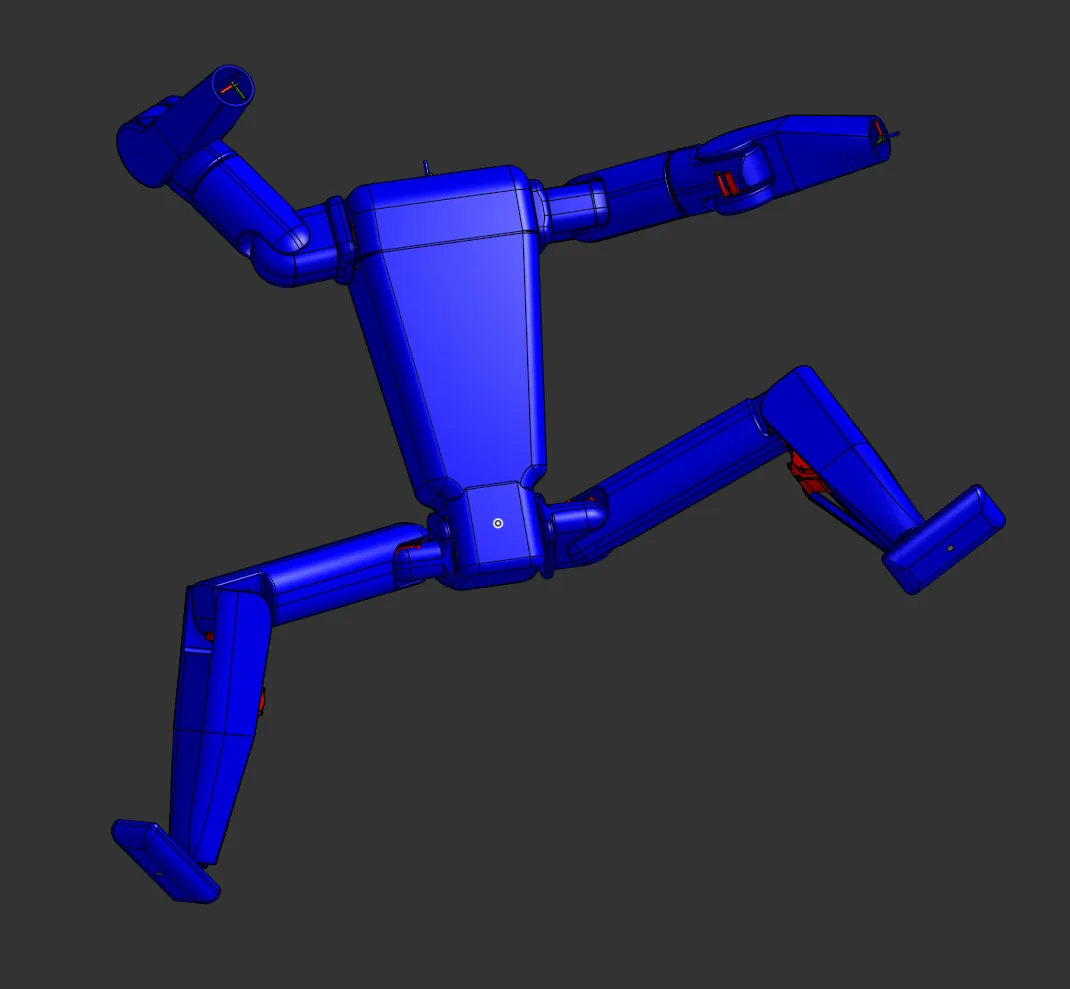

A 16-DoF, 5'6" bipedal robot designed from scratch for general home tasks, with a custom CAD-to-simulation pipeline and PPO locomotion policies trained in NVIDIA Isaac Lab across 58+ runs.

Atom is a 16-DoF bipedal robot I’m designing from scratch with the eventual goal of doing the kinds of small jobs that fill up a Saturday: walking room to room, going up and down stairs, reaching the top shelf, unloading the dishwasher, moving a laundry basket. Right now it lives entirely in simulation. The work so far has been mechanical CAD, a custom CAD-to-URDF export pipeline, and a stack of reinforcement-learning locomotion policies trained in NVIDIA Isaac Lab. The plan is to build the first physical version once the sim policies are stable enough to be worth the bent metal for.

The project is ongoing. Nothing I have today is good enough to put under real hardware. The most stable current policy walks the full episode but with a hip waddle and locked knees that I wouldn’t trust on stairs or uneven ground, and a meaningful share of the next several months of work is more sim training to fix that.

The whole stack runs on Linux. Part of that is Isaac Lab’s native home, but more of it is preparation: the eventual real robot will be running ROS 2 on a Jetson, and doing the simulation work on the same OS as the deployment target makes the eventual sim-to-real transfer one less moving part. Living in the Linux toolchain day to day, including udev, systemd, conda envs, X11 versus Wayland for headed sim, and the things that go wrong with NVIDIA drivers, is also just useful familiarization for when there’s a real robot to bring up.

CAD is publicly viewable on OnShape: Atom Humanoid Assembly.

Design Intent

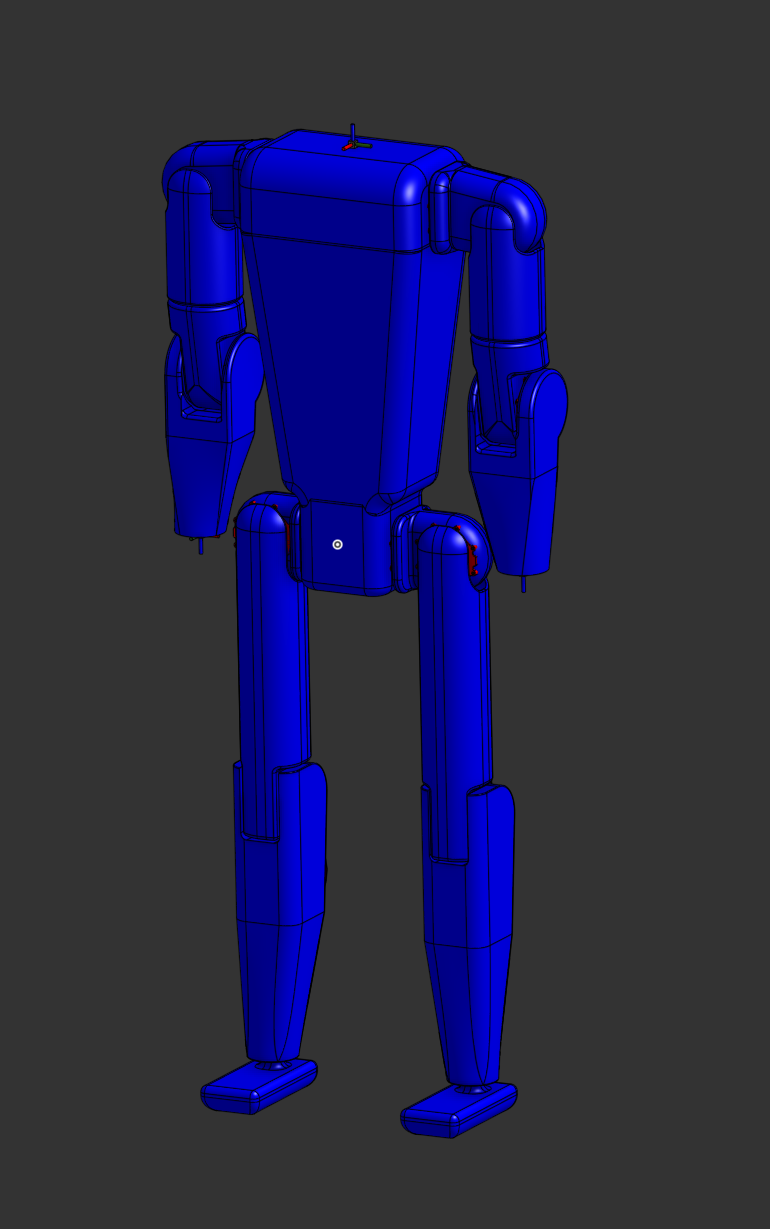

Two design rules drove most of the geometry. First, as few degrees of freedom as possible. The more joints there are, the more there is to design, fabricate, wire, and tune, and the longer it takes to get to a working robot. Second, anthropometric proportions for a 5’6” body. Useful working heights in a kitchen, the depth of a stair tread, and the height of a typical countertop are all tuned to people, and matching them is cheaper than redesigning the world.

The link lengths come straight from anthropometric tables for that height: 38 cm shin, 40 cm thigh, 29 cm upper arm, 24 cm forearm. Shoulder height lands at 137 cm and the maximum upward reach is 207 cm, which clears the top of a standard kitchen cabinet without standing on tip-toes. Shoulder width is 37 cm, hip width 28 cm.

I picked the joint set deliberately: hip pitch and roll, knee pitch, ankle pitch, shoulder pitch and roll, upper-arm yaw, elbow pitch, bilateral, eight per side. The big omission is hip yaw. Adding it would let the robot step out of line with its torso the way the Unitree H1 does, which would clean up the gait. It also adds two more actuators, two more wiring runs, and two more failure modes. With cost and buildability as the binding constraint, it stayed off the BoM. If I do a V2, hip yaw is the first thing I’d add. There’s no head and no waist joint either; both are deferred. The robot doesn’t need either to walk, and a head can be designed once the rest of the body is real.

Target compute is a Jetson Nano on board, which limits how big the policy network can be at deployment.

Mechanical Design

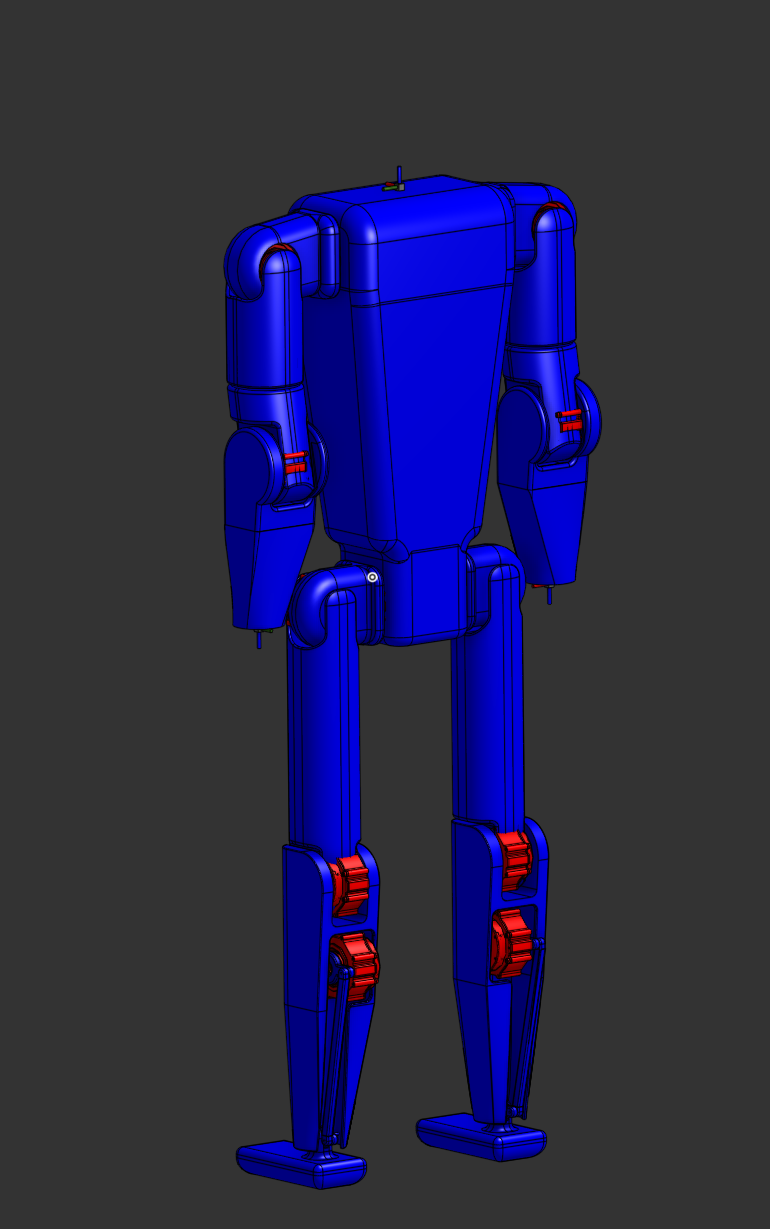

The whole frame is intended to be machined aluminum, assembled in a clamshell scheme. Every link splits into two halves along its long axis, with the actuator and wiring sandwiched between them. The structure is the cover: there’s no separate skin to design, internal cabling has a path that gets closed up when the halves come together, and any joint can be opened by pulling four screws and a clamshell half. That kind of access matters when something needs replacing in the field.

The ankle was the worst joint to translate from CAD to URDF. Originally I drew it as a four-bar linkage with the motor mounted up the shin, which keeps mass off the foot and gives a nice mechanical advantage. The closed kinematic loop doesn’t survive the trip into URDF and Isaac Lab cleanly though, since URDF assumes a tree topology, and the workarounds are all leaky. I ended up converting the joint to a true parallel linkage with a 1:1 drive ratio between motor and ankle so the simulation could treat it as a single revolute joint and stay honest about the dynamics. A shorter ankle-side link with a longer motor-side link would give more usable range and is what I’d pick if I were rebuilding the joint, but the 1:1 version was the fastest way to a sim that wasn’t lying.

For actuators I went with the RobStride RS03 family across all 16 joints. Quasi-direct-drive, 60 Nm peak, 20 Nm continuous, 9:1 reduction. I started looking at this category of actuator while researching K-Scale, ended up evaluating the RobStride lineup directly, and the RS03 was the smallest single-SKU option that covered the worst-case torques (ankle, knee, hip abduction). I made the call deliberately to use one SKU everywhere even though it meant slight oversizing at the elbows and shoulders. The benefits compound: one wiring harness pinout, one CAN address scheme, one spare on the shelf, one set of firmware and tuning parameters to maintain. With the robot being a one-person project, that simplification pays back faster than the marginal weight savings of mixing in a smaller actuator at the lighter joints.

CAD → Simulation Pipeline

CAD lives in OnShape. A pipeline built on onshape-robotics-toolkit exports the assembly to URDF and pulls STL meshes directly from the OnShape API. Four post-processing steps run after each export:

- Clean. Strips OnShape reference frames from the URDF.

- Joint limits. Applies per-joint limits from a YAML config.

- Hip centering. Corrects the root body origin to sit between the hips. OnShape places it at one hip joint by default.

- Foot collision. Replaces curved STL foot meshes with flat 18×7×3 cm box primitives.

The foot fix turned out to matter more than I expected. PhysX generates line contact on curved surfaces no matter what friction coefficient you set, so the robot just slips. A flat box gives proper 2D patch contact and kills off a whole class of failed gaits.

A pain point, more practical than technical: OnShape rate-limits API calls aggressively on the free tier, and an export pulls dozens of meshes per pass. A few failed exports in a row will lock me out for the rest of the day, which is annoying when the failure is something I could fix in five minutes if I could just iterate.

For sim-to-real fidelity, I want to characterize the actuators properly before pushing onto hardware: rotor inertia, friction (Coulomb plus viscous), backlash, motor constants under load, and how those vary with temperature. The sim already uses an armature = 0.005 kg·m² reflected-rotor estimate, but a measured number per actuator is the right input. Once I have those distributions I can use them as the centerline for domain randomization rather than guessing the spread.

RL Training

Training uses NVIDIA Isaac Lab with RSL-RL (PPO). The full observation is 247-dimensional once the height scanner is on: proprioception (joint angles, velocities, base linear/angular velocity, gravity vector, last action) plus a 17×11 height-scan grid centered on the robot. The rear 8 columns of the grid are zeroed out so the policy only sees the 9 forward columns the D435i can actually fill on hardware.

How the reward function evolved

The first attempts at the reward function were closer to a kitchen-sink approach. 17 active terms, with explicit knee-singularity penalties, base-height tracking, foot-clearance shaping, and a torque cost on top of the standard tracking and survival rewards. The robot would converge to something, usually not a great something, and tuning any one weight would knock another behavior over.

The simplification was to walk over to the Unitree H1 reference config and copy its reward structure mostly verbatim, then adjust only where Atom’s geometry forced a change. That dropped the active term count from 17 to 11 and converged faster and cleaner. The compromises I had to make against the H1 baseline were:

action_rate_l2 = -0.005(the H1 value) blew up around iteration 1700 every time. Atom doesn’t have hip yaw, so stance-to-swing transitions are inherently jerkier than H1’s, and the same penalty weight drives gradient explosion. Dropping to-0.001is stable.- Added

feet_contact_at_rest(-0.5) to penalize lifting the feet when commanded velocity is below 0.05 m/s. H1 doesn’t need this; combining a stepping reward and a no-stepping penalty in one policy creates a structural tension I’ll come back to below. - Added

dof_pos_limitson knees as well as ankles, since the policy was happy to clip against the joint limits as a control strategy. - Termination on leg contact. Without it, the policy discovered it could survive an episode by sitting down on its femurs (more on this two paragraphs from now).

There are still 11 weighted reward terms in the current config: linear and angular velocity tracking, feet-air-time, feet-slide, flat-orientation, angular-velocity penalty, joint-deviation on arms and hip-roll, position-limit penalties, action-rate, joint-acceleration, and the termination penalty. Most of the project’s training time has gone into picking weights for these.

The neural net got bigger when terrain came in

The flat-terrain policy trains on 60 observations and a [128, 128, 128] MLP. When I turned on the height scanner for rough terrain, the observation jumped to 247, and a [128, 128, 128] net was visibly under-capacity. Reward stalled, training plateaued. I rebuilt the actor and critic at [512, 256, 128]. That bumped throughput cost a bit but was a flat improvement in convergence on rough terrain. One incidental gotcha: the layer shapes are different, so flat-terrain checkpoints don’t load into the rough-terrain network. RSL-RL doesn’t error on the mismatch, it just silently initializes the new network from scratch. I lost about a day to that before noticing the actor weights were random.

Reward hacking is the default outcome

A recurring story across the run log is “training looked great, then I watched a checkpoint.” On rough terrain in particular, the policy would happily settle on strategies that maximized the reward signal without doing any of the things I actually wanted. The classic was sitting on its femurs or its elbows: contact rewards stay clean, the orientation cost stays low, and the termination doesn’t fire because the base height is fine. Without watching the checkpoint you couldn’t tell from the curves. Some tells did show up if you looked at the right metric, like a survival rate that crept toward 100% while linear velocity tracking stayed stuck near zero, but the only reliable check was to render the robot in playback every few hundred iterations and trust your eyes.

A leg-contact termination at 200 N closed the femur-sitting loophole. The general lesson is to treat training metrics as necessary, not sufficient: a checkpoint isn’t doing what I think it’s doing until I’ve seen it run.

Run 50 on rough terrain is the canonical example. Mean reward had climbed to +19.3, the first time it had ever gone positive on rough terrain, and the survival rate looked like a real result. Then I watched a checkpoint:

The robots aren’t walking. They’re locked in a deep squat and shuffling forward across the terrain in a half-crouch. It’s an interesting strategy. The low center of mass keeps survival rates up on bumpy ground because there isn’t far to fall, and the small steps avoid the failure modes that come with full swing-phase commitment. It scores decently against the reward signal. It would not get the robot up a flight of stairs. The whole point of the rough-terrain training was the staircase eventually, and this gait can’t do it.

Earlier in this same run the policy was even more degenerate. The dominant strategy for a stretch of training was to park on the femurs and ride out the episode without taking a step. You can still catch a flash of it in the final checkpoint:

The +19.3 mean reward was never about locomotion. It was about the policy finding a low-effort equilibrium the reward function didn’t punish.

Adding the leg-contact termination at 200 N closed the femur-sitting version. The next run produced straight-legged shuffling. The one after that produced bang-bang torque commands. Each fix has surfaced a new exploit within hours.

Other failures worth naming

A few non-obvious failure modes that cost real time:

- Ankle dorsiflexion was the hidden constraint preventing knee bend. A 30° ankle limit makes any stance needing more than 30° dorsiflexion physically impossible, so every policy converged to a straight-leg gait regardless of what the reward function said about it. Raising the limit to 45° in the URDF was the unlock.

- Spawn pose matters more than I expected. A 70° knee bend at spawn produces a wide sumo-style gait, since from a deep squat it’s easier to swing the leg around the stance leg than forward. 29° converges to a straight-leg waddle. 50° is the current sweet spot. This is also why so many internet humanoids walk crouched: the policy learns that staying tall is more stable, so without a bias toward bent knees at init it just won’t use them.

- Isaac Lab’s

root_height_below_minimumuses world Z, not height above terrain, so it incorrectly terminates the robot on sloped ground. This was masquerading as “rough terrain training is unstable” until I read the source. --load_runis silently ignored without--resume. For about 30 runs my improvements were coming from config changes alone, not weight transfer. A real:facepalm:moment when I caught it.

Early flat-terrain wins

Before the rough-terrain push, the flat-terrain policy went through several weeks of iteration that produced a few visibly-stable baselines worth naming. Run 2 was the first clean walking result, after fixing a coordinate-frame bug in the URDF that had the velocity arrow pointing backward: forward motion, no lateral shuffle, hip-dominant straight-leg gait at 97% survival. Phase 2.5b (Run 8) added a feet_contact_at_rest penalty so the policy could be commanded to stand. The trick was extending the velocity command range gradually, since jumping straight from (0.0, 1.0) blew the policy up the first time. Run 10 consolidated 2.5b at 5120 environments and 1500 iterations, mean reward 29.2, the best flat-terrain result of the early phases.

These checkpoints don’t replay under the current network architecture (the rough-terrain rebuild grew the actor MLP and added height-scan observations the original policies don’t know about), so I’m not embedding clips of them here.

Splitting the policy

The original plan was a single policy that handled forward walking, backward walking, standing in place, and stair traversal. One network, one training run, one set of weights. That didn’t work. The optimizer kept landing in compromise gaits that weren’t good at any one thing.

The clearest example was standing. feet_air_time rewards lifting the feet during stepping, and feet_contact_at_rest penalizes lifting them while standing still. At zero commanded velocity those two terms are equal and opposite, and the policy finds equilibrium by marching just enough to balance them. No weight tuning fixes that, since it’s a structural conflict in the reward.

The fix was to deploy multiple specialized policies and switch between them at runtime based on the commanded velocity magnitude, with the threshold at 0.05 m/s. The same kind of split happens at the terrain boundary: the rough-terrain and stair policies want different reward weights and different network capacity than the flat-terrain policy, and forcing them to share would just compromise both.

This works, and it’s what most humanoid stacks I respect end up doing, but it’s a concession. A single end-to-end policy that handled everything would be cleaner. Even with the splits, the gaits aren’t pretty. There’s a stiffness to the arms, a slight stutter at speed transitions, an overall sense that the robot is doing the locomotion-shaped task rather than walking.

Two contrast points from the spawn-pose study after the ankle limit was raised to 45°. Run 57 launches from a 70° knee bend, and you can see the sumo gait the deep spawn produces in the first second of the clip. What’s striking is what happens next: the policy doesn’t stay there. The robots stand up out of the squat over the course of the episode and slide into a straight-legged walk. The deep spawn was meant to bias exploration toward bent-knee walking, but the optimizer found that the easier path is to leave the squat behind as soon as it stops being free.

Run 58 backs the spawn off to a 50° knee bend and uses the H1-matched reward set throughout. Mean reward 30.1, 87.7% survival. This is the most stable flat-terrain result so far. The gait is straight-legged with a noticeable hip waddle, clearly not what I want to put under real metal, but the robots walk at speed for the full episode without falling apart, which earlier policies couldn’t manage. It’s the current baseline, and the one I’ll fine-tune the smoother-action and torque-envelope rewards from next.

Stair traversal in particular hasn’t reached “I’d put hardware under it” quality yet. The policy can negotiate single steps reliably and short flights with mixed success, but a full staircase still trips it more often than I’d like.

Hardware Analysis in the Loop

A custom log_hardware.py script captures joint torques, contact forces, and PD saturation stats during play sessions so I can sanity-check actuator sizing. The aim is a per-joint torque envelope (peak, RMS, and duty cycle) all kept inside the RS03’s published 60 Nm peak and 20 Nm continuous numbers. On hardware, exceeding continuous torque for any length of time is how you burn out a motor.

This turned out to be hard to achieve. PPO with default reward weights does not care about torque, only about reward, and the policy will happily ask for whatever torque produces the next reward increment. Early analysis caught elbow joints holding against mechanical stops at around 180 Nm RMS, far above rated. That was a reward-function artifact: action_rate_l2 penalizes velocity but not magnitude, so the policy was using the PD controllers as torque sources. The same pattern shows up in subtler ways across the rest of the body, with quick command swings briefly demanding peak torque several times per stride. Fine on paper, cooks the motor in practice.

The first thing I tried was clamping the action space and the simulated motor model to the real torque limits, so the policy literally could not output something the hardware couldn’t deliver. That made training noticeably harder. Random exploration at initialization spends most of its time saturated against the limits, gradient signal is weak when the controller is saturated for long stretches, and the policy struggled to find a useful gait. So I switched to a two-stage approach: train the early policies with effectively unlimited torque so they can find a stable gait quickly, then load a good checkpoint and fine-tune with progressively tighter regularization (action-rate, action-magnitude, and joint-torque penalties) plus envelope-tracking rewards that explicitly favor smoother and smaller commands. It’s a curriculum: let the optimizer find the manifold of “walking” cheaply, then push that manifold toward “walking inside the motor envelope.”

It works, but it isn’t done. The current Run 58 checkpoint walks; it doesn’t yet walk inside the envelope I want for hardware. That’s the next round of training.

Catching all of this in sim before bending real shafts is exactly why the sim work has to come first.

What’s next

The gaits I have today aren’t ones I’d put under hardware. The most stable flat policy walks the full episode but with locked knees and a clear hip waddle, the rough-terrain policies are nowhere near deployable, and the stair work has barely started. Real progress on Atom right now is more sim time, not more CAD or more soldering. A few specific directions:

- Reference motion data as a behavior prior. I’m increasingly convinced PPO from a flat reward landscape isn’t going to find natural human-like walking from this geometry on its own. The plan is to feed the optimizer a worked example, like human walking captures or animation library cycles, and either use it as an imitation signal alongside the existing rewards or pre-train against it. The open question is sourcing: motion-capture databases retargeted to a 16-DoF body, animation cycles from open game assets, or my own hand-keyframed cycles in Blender are all candidates.

- Smoother-action and torque-envelope rewards on top of Run 58. Fine-tune the current best checkpoint with progressively tighter regularization until the per-joint torques stay inside the RS03 continuous rating during steady walking.

- Velocity estimator. True base linear velocity isn’t observable on hardware, so a small RNN that maps proprioceptive history to velocity needs to be trained alongside the policy. The estimator-policy pair has its own training loop.

- Terrain height estimator from D435i depth. A CNN trained in sim that maps a depth image to the 187-element height-scan vector the policy expects. Extreme-Parkour style.

- Actuator characterization. Bench-test rotor inertia, friction, and motor constants on real RS03s, then re-run the worst training cases with measured values and tighter domain randomization.

- Hip yaw, eventually. If a V2 ever happens, two more actuators per leg for hip yaw is the highest-value DoF I left out.

- Build the thing. Aluminum clamshell parts, the BoM exists, this is mostly machining time and money. Not until the gait is good.